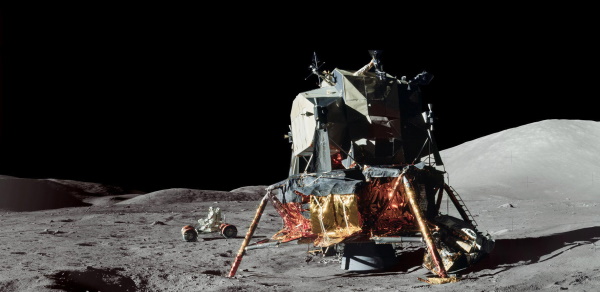

This post will illustrate two things: the amount of time astronauts have spent on the moon, and how to process dates and times in Python.

I was curious how long each Apollo mission spent on the lunar surface, so I looked up the timelines for each mission from NASA. Here’s the timeline for Apollo 11, and you can find the timelines for the other missions by making the obvious change to the URL.

Here are the data on when each Apollo lunar module touched down and when it ascended.

    data = [
        ("Apollo 11", "1969-07-20 20:17:39", "1969-07-21 17:54:00"),
        ("Apollo 12", "1969-11-19 06:54:36", "1969-11-20 14:25:47"),
        ("Apollo 14", "1971-02-05 09:18:13", "1971-02-06 18:48:42"),
        ("Apollo 15", "1971-07-30 22:16:31", "1971-08-02 17:11:23"),
        ("Apollo 16", "1972-04-21 02:23:35", "1972-04-24 01:25:47"),
        ("Apollo 17", "1972-12-11 19:54:58", "1972-12-14 22:54:37"),
    ]

Here’s a first pass at a program to parse the dates and times above and report their differences.

    from datetime import datetime

    def str_to_datetime(string):
        # Parse a timestamp such as "1969-07-20 20:17:39"
        return datetime.strptime(string, "%Y-%m-%d %H:%M:%S")

    def diff(str1, str2):
        # Time elapsed from str2 to str1, as a timedelta
        return str_to_datetime(str1) - str_to_datetime(str2)

    for (mission, touchdown, liftoff) in data:
        print(f"{mission} {diff(liftoff, touchdown)}")

This works, but the formatting is unsatisfying.

    Apollo 11 21:36:21
    Apollo 12 1 day, 7:31:11
    Apollo 14 1 day, 9:30:29
    Apollo 15 2 days, 18:54:52
    Apollo 16 2 days, 23:02:12
    Apollo 17 3 days, 2:59:39

It would be easier to scan the output if it were all in hours. So we rewrite our diff function as follows.

    def diff(str1, str2):
        # Time elapsed from str2 to str1, in hours rounded to two decimals
        delta = str_to_datetime(str1) - str_to_datetime(str2)
        hours = delta.total_seconds() / 3600
        return round(hours, 2)

Now the output is easier to read.

    Apollo 11 21.61
    Apollo 12 31.52
    Apollo 14 33.51
    Apollo 15 66.91
    Apollo 16 71.04
    Apollo 17 74.99
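The first version printed days separately because a `timedelta` stores its value as whole days plus leftover seconds and microseconds. Here is a minimal sketch, using the Apollo 15 times from the table above, of why `total_seconds()` is the method to use: the `seconds` attribute holds only the part of the duration beyond the whole days.

```python
from datetime import datetime

fmt = "%Y-%m-%d %H:%M:%S"
touchdown = datetime.strptime("1971-07-30 22:16:31", fmt)
liftoff = datetime.strptime("1971-08-02 17:11:23", fmt)

delta = liftoff - touchdown

# A timedelta is normalized into whole days plus leftover seconds.
print(delta.days)             # 2
print(delta.seconds)          # 68092, i.e. 18:54:52 beyond the two full days
print(delta.total_seconds())  # 240892.0, the full duration in seconds
```

Dividing `delta.seconds` by 3600 would have reported about 18.91 hours for Apollo 15 rather than 66.91.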

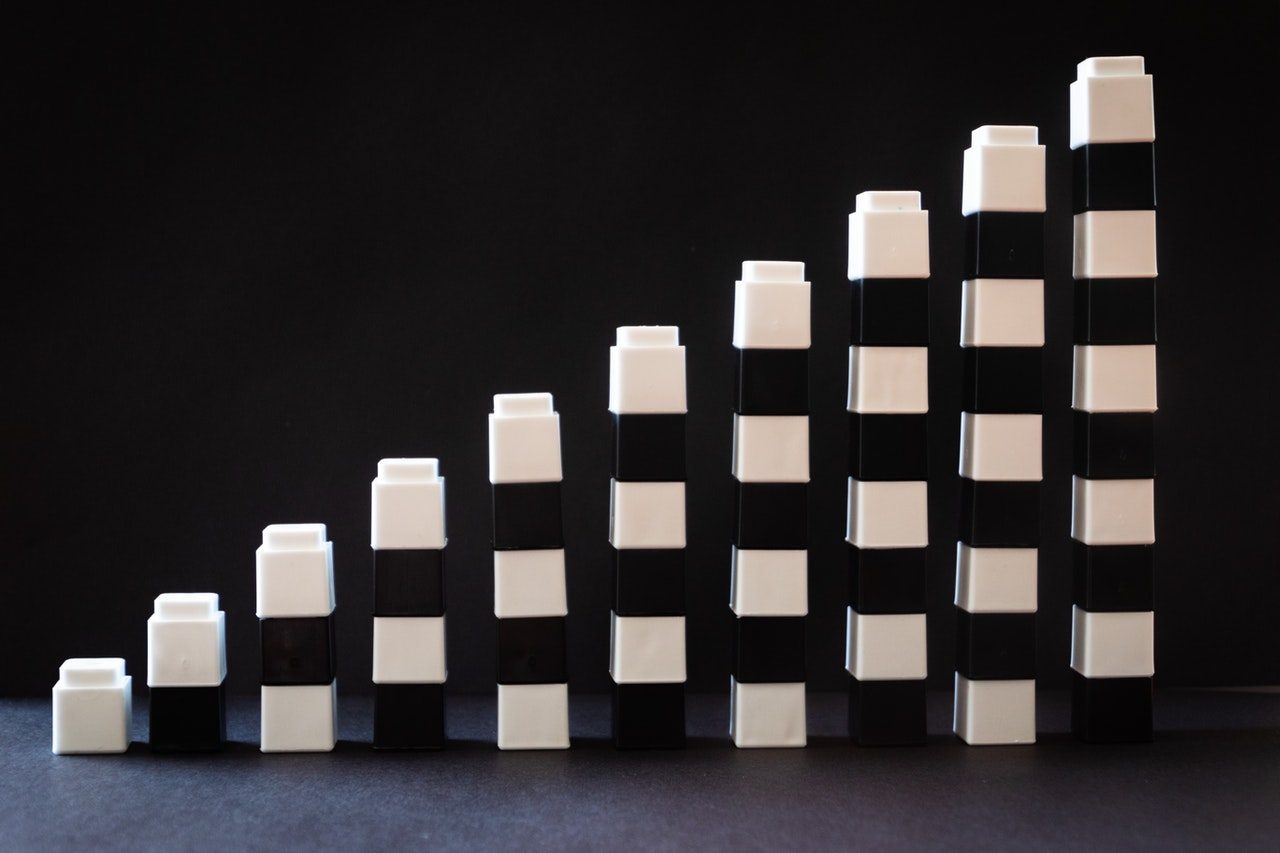

These durations fall into three clusters, corresponding to the Apollo mission types G, H, and J. Apollo 11 was the only G-type mission. Apollo 12, 13, and 14 were H-type, intended to demonstrate a precise landing and explore the lunar surface. (Apollo 13 had to loop around the moon without landing.) The J-type missions were more extensive scientific missions. These missions included a lunar rover (“moon buggy”) to let the astronauts travel further from the landing site. There were no I-type missions; the objectives of the original I-type missions were merged into the J-type missions.
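As a rough check on the clustering, the sketch below groups the durations by mission type. The type labels are my reading of the paragraph above (Apollo 11 as G, Apollo 12 and 14 as H, Apollo 15 through 17 as J), and the name `missions` is made up for this illustration.

```python
from datetime import datetime

FMT = "%Y-%m-%d %H:%M:%S"

# (mission, type, touchdown, liftoff); times copied from the table above,
# type labels assigned as described in the text
missions = [
    ("Apollo 11", "G", "1969-07-20 20:17:39", "1969-07-21 17:54:00"),
    ("Apollo 12", "H", "1969-11-19 06:54:36", "1969-11-20 14:25:47"),
    ("Apollo 14", "H", "1971-02-05 09:18:13", "1971-02-06 18:48:42"),
    ("Apollo 15", "J", "1971-07-30 22:16:31", "1971-08-02 17:11:23"),
    ("Apollo 16", "J", "1972-04-21 02:23:35", "1972-04-24 01:25:47"),
    ("Apollo 17", "J", "1972-12-11 19:54:58", "1972-12-14 22:54:37"),
]

# Collect surface time in hours for each mission type
by_type = {}
for name, mtype, down, up in missions:
    delta = datetime.strptime(up, FMT) - datetime.strptime(down, FMT)
    by_type.setdefault(mtype, []).append(delta.total_seconds() / 3600)

for mtype, hours in by_type.items():
    print(f"{mtype}: {min(hours):.2f} to {max(hours):.2f} hours")
```

The ranges for the three types do not overlap, which is what makes the clusters easy to see.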

One note about the Python code: subtracting dates works as you’d expect, depending on your expectations. Informally, the difference between two dates is a positive span of time no matter which date you mention first. In Python the order matters: a later date minus an earlier date is positive, and an earlier date minus a later date is negative. That is the same sign you’d get by subtracting the number of seconds from some epoch to each date.
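To make the sign convention concrete, here is a small sketch using the Apollo 11 times. Subtracting in the other order gives a negative `timedelta`, which Python prints in a normalized form that can look odd at first.

```python
from datetime import datetime

fmt = "%Y-%m-%d %H:%M:%S"
touchdown = datetime.strptime("1969-07-20 20:17:39", fmt)
liftoff = datetime.strptime("1969-07-21 17:54:00", fmt)

print(liftoff - touchdown)       # 21:36:21
print(touchdown - liftoff)       # -1 day, 2:23:39
print(abs(touchdown - liftoff))  # 21:36:21
```

The middle line means minus one day plus 2:23:39, which is the same as minus 21:36:21. Taking `abs` of the difference is one way to get a duration that doesn't depend on argument order.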

Incidentally, UNIX systems store times as seconds since 1970-01-01 00:00:00 UTC. That means the first two lunar landings were at negative times and the last four were at positive times. More on UNIX time here.
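You can check the signs with a short sketch like the one below. It assumes the times in the table are UTC, which the data above does not state explicitly, and `unix_time` is a helper name made up for this example.

```python
from datetime import datetime, timezone

fmt = "%Y-%m-%d %H:%M:%S"

def unix_time(string):
    # Assumption: the timeline times are UTC, so attach that time zone
    # before converting to seconds since the epoch.
    dt = datetime.strptime(string, fmt).replace(tzinfo=timezone.utc)
    return dt.timestamp()

print(unix_time("1969-07-20 20:17:39"))  # Apollo 11 touchdown: negative
print(unix_time("1972-12-11 19:54:58"))  # Apollo 17 touchdown: positive
```

Attaching an explicit time zone keeps the result from depending on the local time zone of the machine running the code.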

